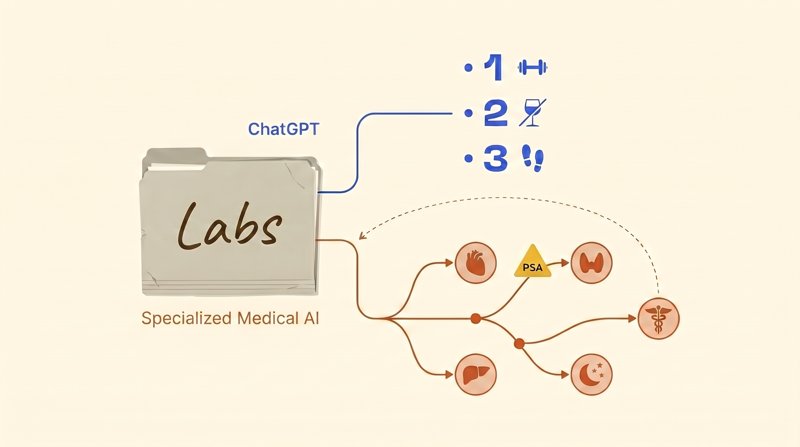

We loaded five clinical cases into ChatGPT and into Wizey — the two AI tools a patient today is most likely to open when they’re trying to make sense of a lab report — and asked each one to interpret the same labs. Both AIs reached the same diagnosis. What happened after that was unexpected, and it changes the question worth asking of the technology.

Two Answers for the Same Person

Forty-five-year-old man. One set of labs. One short prompt: “please interpret my labs.” Two services. Here’s what came back — verbatim.

ChatGPT: Do three things and most of the markers will normalize. 1) Lose 8-12 kg. 2) Alcohol: almost every evening right now. For the liver and insulin this is critical. 3) Physical activity: a minimum of 8,000-10,000 steps per day plus strength training three times a week.

Wizey, the specialized medical AI: Endocrinologist — to manage prediabetes, insulin resistance, and discuss testosterone replacement therapy. Cardiologist or internist — for cardiovascular risk assessment. Gastroenterologist or hepatologist — to confirm fatty liver disease. Sleep specialist or ENT — to evaluate for sleep apnea. Urologist-andrologist — for a deeper workup of sexual dysfunction. PSA testing — required before any discussion of testosterone therapy (standard screening for men 45 and over).

One person, one set of labs. Both AIs correctly read the picture — metabolic syndrome, insulin resistance, functional hypogonadism, suspected sleep apnea, vitamin D and zinc deficiency. The diagnosis matched. What diverged was what this man should actually do in the next two weeks.

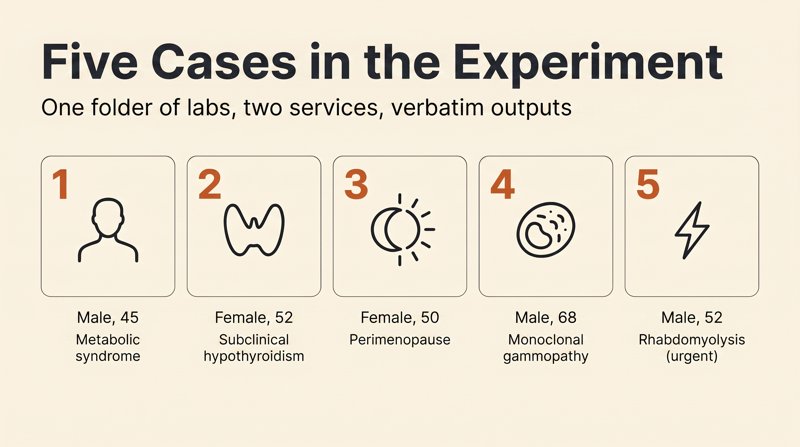

We’re the team behind Wizey. Going into this experiment, our working bet was that ChatGPT would miss the diagnosis. It didn’t miss once in five cases. The real finding was something else: a correct primary diagnosis is not the same as helping the patient. Across five cases we’ll show where the real line of difference runs — and we’ll name the one case where ChatGPT beat us on clinical substance.

How We Tested

- We assembled five clinical panels reconstructed from real published cases (PubMed, Blood, Annals of Family Medicine) — preserving every clinically meaningful abnormality and symptom.

- We loaded the identical panel into both services: ChatGPT (Plus tier, GPT-5.4) and Wizey, a specialized medical AI.

- For ChatGPT we typed a short prompt: “please interpret my labs.” No follow-ups, no prompt engineering. The goal was to mimic what an ordinary patient does holding a printout.

- All outputs were captured verbatim. Direct quotes in this article are unedited; places where we trimmed for length are marked with an ellipsis.

- Four cases are routine outpatient scenarios; one bonus case is urgent, included specifically to test triage behavior.

A note on scope

Five cases is a pattern illustration, not a statistic. We saw the same shape in each of the five, but we’re not presenting this as a thousand-run randomized study.

We’re the Wizey team — the conflict of interest is obvious. To partially offset it: the methodology was fixed before the runs, all outputs are quoted verbatim, and wherever ChatGPT beat us, we say so directly. The source publications from which each panel was reconstructed are named inline, so anyone can reproduce the experiment.

All tests were run on a single day, April 17, 2026.

What the Peer-Reviewed Literature Says

For readers who want the numbers before the case studies, here’s what the peer-reviewed literature reports about ChatGPT in lab interpretation:

- Cabral et al., PLOS ONE 2024: on specialized laboratory-medicine questions, ChatGPT interprets correctly in roughly 51% of cases, and 17% of answers are outright wrong.

- Nature Communications Medicine, 2025: when a false medical value is quietly inserted into the prompt context, LLMs “double down” on it in 83% of cases — that is, they accept the false value and build reasoning on top of it, never flagging the inconsistency.

- Nature Scientific Reports, 2025: on mixed acid-base disorders, ChatGPT returns a falsely reassuring “normal” verdict in 16.7% of cases; ICU physicians on the same cases show a 0% rate of false reassurance.

The numbers, to put it gently, are alarming. But there’s a wrinkle. In our five-case test, ChatGPT reliably produced the correct primary diagnosis — even in the cases where we expected it to stumble: subclinical hypothyroidism, MGUS (monoclonal gammopathy of undetermined significance), statin-induced rhabdomyolysis, the perimenopausal transition, metabolic syndrome with functional hypogonadism. Five out of five.

That’s the real pivot of this experiment. The difference isn’t “got it right or got it wrong,” as people tend to frame it. The difference is in what comes after the correct diagnosis. That’s the layer we’ll unpack next.

Case 1: Forty-Five, Tired of Being Tired

Forty-five-year-old engineer. Sedentary work, a major project under deadline, chronic stress. Complaints: persistent fatigue that a weekend off doesn’t fix, declining libido, weight gain, snoring, morning headaches, reflux two or three times a week, knee pain. Alcohol: a glass or two of wine nearly every evening, plus spirits on weekends. Family history: father has type 2 diabetes, mother has hypertension. The classic “I finally got around to running labs” middle-aged man.

Key panel abnormalities. HbA1c 5.9%. HOMA-IR (insulin resistance index) 4.9, nearly double the upper limit of <2.5. Triglycerides 2.4 mmol/L, LDL 3.6, HDL 0.95, ApoB 1.35, atherogenic index 5.1. ALT 58 U/L, GGT 78 — a non-alcoholic fatty liver disease (NAFLD) pattern. Free testosterone 220 pmol/L, below range. Vitamin D 18 ng/mL, zinc 9.4 μmol/L, B12 260 pmol/L, homocysteine 11.8. hs-CRP 4.8 mg/L, uric acid 468 μmol/L, cortisol 580 nmol/L. Waist circumference 104 cm (normal <94).

Parity on diagnosis. Both AIs produced the same list: metabolic syndrome, insulin resistance, prediabetes, atherogenic dyslipidemia, NAFLD, functional hypogonadism, vitamin D and zinc deficiency, chronic low-grade inflammation, hyperuricemia, suspected obstructive sleep apnea, high-normal cortisol. ChatGPT didn’t miss a piece of the clinical picture. It assembled every data point honestly.

Now — where the two diverged.

Referral routing. ChatGPT named zero specific specialists. The “where to go” block consisted of “emergency room” (reserved for rhabdomyolysis, not this case) and phrases like “usually prescribed” or “discuss with your doctor.” Wizey laid out five specialists with their specific responsibilities:

Endocrinologist — to manage prediabetes, insulin resistance, evaluate the need for pharmacologic lipid and glucose correction, and discuss testosterone replacement therapy if lifestyle changes are insufficient. Cardiologist or internist — for cardiovascular risk assessment. Gastroenterologist or hepatologist — to confirm fatty liver disease. Sleep specialist or ENT — to evaluate for sleep apnea. Urologist-andrologist — for a deeper workup of sexual dysfunction.

— Wizey

In most healthcare systems, seeing five specialists in two weeks is unrealistic — the PCP-as-gatekeeper referral path is more realistic, and even with private insurance, lining up five consults takes a month. But at least the patient knows who they’re seeing and why. “Discuss with your doctor” is a non-answer when typical endocrinology wait times run weeks to months; the patient just opens another AI chat. Or worse — starts self-treating. Or simply loses time that matters.

The safety layer — the biggest miss. ChatGPT flagged low free testosterone and explained the causes. That’s where it stopped. Wizey inserted this as its own line item:

PSA (prostate-specific antigen) testing — required before any discussion of testosterone therapy. Standard screening for men 45 and over.

— Wizey

This isn’t a nitpick. Testosterone replacement therapy (TRT) in the presence of undiagnosed prostate cancer can accelerate tumor progression — PSA screening before TRT initiation is written into most of the relevant guidelines (Endocrine Society, AUA, and others). A 45-year-old who reads ChatGPT’s output and goes looking for TRT at a boutique clinic without a PSA is a real clinical failure waiting to happen. The Endocrine Society’s testosterone therapy guideline is unambiguous on this.

Quantitative targets. ChatGPT: “Vitamin D3 4000-5000 IU, magnesium 300-400 mg, zinc 20-30 mg, omega-3 2-3 g, B-complex.” Wizey on the same question:

Vitamin D — at a serum level of 18 ng/mL, typical dosing is 2000-5000 IU/day to reach a target range of 40-60 ng/mL. Recheck in 2-3 months. Zinc — 15-30 mg as picolinate or citrate. Magnesium 300-400 mg as citrate or glycinate. Omega-3 (EPA+DHA) 1000-2000 mg. Berberine or inositol — discuss with your endocrinologist.

— Wizey

The difference is “how much to take today” versus “what level to reach, what form to choose, when to recheck.” Under the first prompt, the patient takes vitamin D and has no idea whether it’s working. Under the second, there’s a checkpoint.

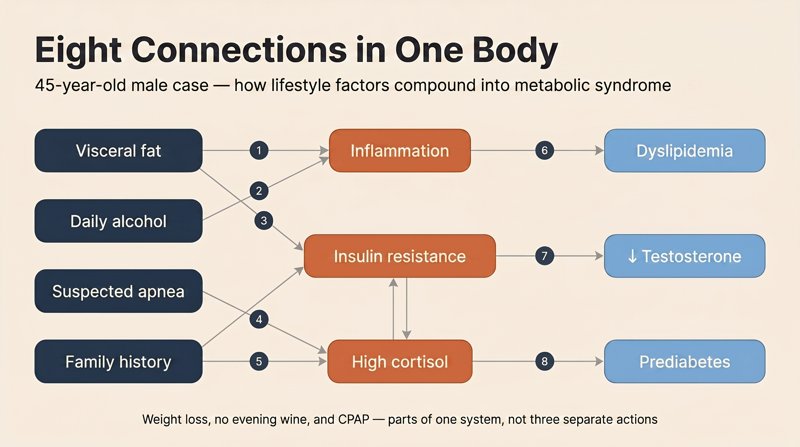

The mechanistic cascade. ChatGPT gave tables and bullet lists: here’s glucose, here’s insulin, here’s LDL. Wizey laid out eight numbered causal links:

1) Visceral fat isn’t just storage — it’s an endocrine-active tissue. It releases inflammatory signals, lowers insulin sensitivity, and converts testosterone to estradiol. 2) Insulin resistance → the pancreas produces more insulin → insulin stimulates hepatic triglyceride and LDL synthesis → dyslipidemia. 3) High cortisol → worsens insulin resistance and suppresses testosterone. 4) Daily alcohol → raises triglycerides and GGT, disrupts sleep. 5) Vitamin D and zinc deficiency → reduced testosterone synthesis. 6) Low free testosterone → loss of muscle mass → slower metabolism. 7) Snoring plus morning headaches → apnea → sleep fragmentation → cortisol + insulin resistance. 8) Family history — genetic background.

— Wizey

This is the mental map a good clinician holds in their head. With it, the patient stops being a passenger in their own physiology: weight loss, giving up the evening wine, and using CPAP for apnea are no longer three unrelated actions — they’re parts of a single system.

Emotional framing and prognosis. ChatGPT stuck to neutral clinical statement. Wizey added a short but important frame:

Good news: most of your issues are reversible… If you act now, the prognosis is excellent — everything can be turned around. Your situation is typical for a modern middle-aged man. The important thing is that you caught it in time. Many people only come in after they’re already diabetic, or after a heart attack.

— Wizey

This isn’t therapy. It’s prognostic information — “acting now matters, it isn’t too late” — plus orientation: “your case isn’t unusual.” For a 45-year-old staring at a panel with twenty abnormal markers, the difference between an emotionless diagnosis and a diagnosis plus the acknowledgment that recovery is still possible is psychologically significant.

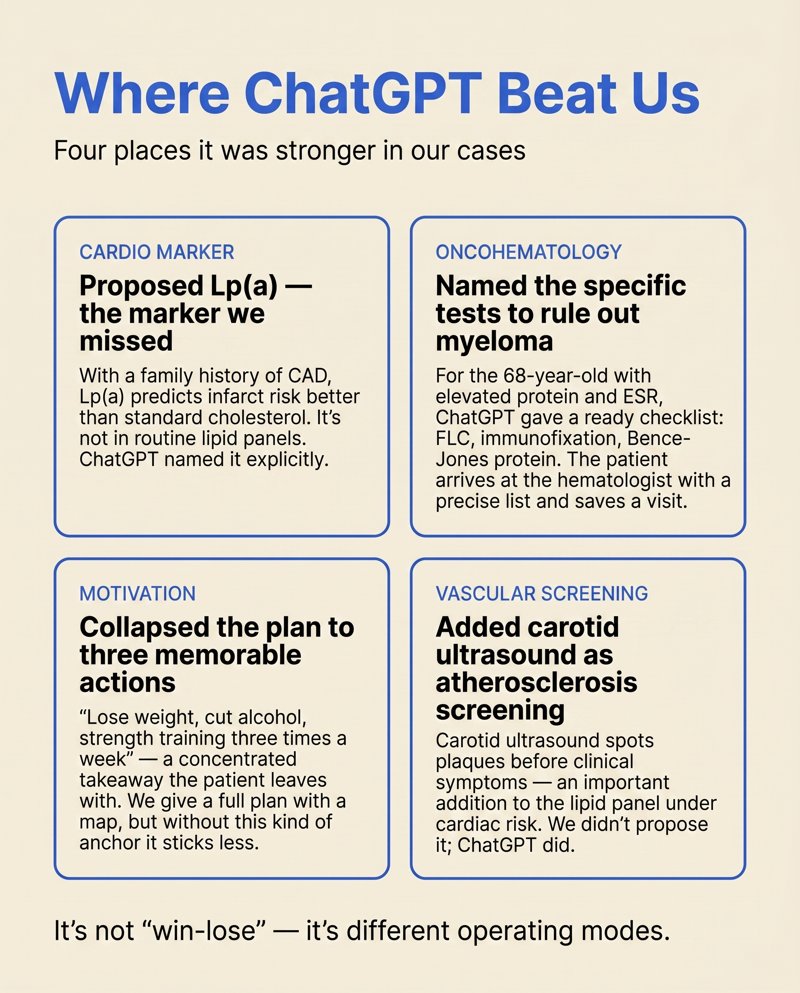

Where ChatGPT won. Lp(a) — with a family history of coronary disease, this is an important cardiac marker we didn’t mention. The condensed “three things to do” formula (weight, alcohol, activity) is memorable and motivating. Carotid ultrasound as an atherosclerosis screen. Real wins for ChatGPT in this case.

Case takeaway. Parity on diagnosis. Divergence on “what does this man actually do in the next 14 days?” ChatGPT gave a motivating three-action formula. Wizey gave five specialists with specific responsibilities, the PSA-before-TRT safety gate, quantitative targets with recheck intervals, a mechanistic cascade, a prognosis, and the reassurance that the patient was on time.

A correct primary diagnosis is zero minutes of actual help if there’s no referral path, no safety check, no target levels, and no “you’re on time” framing after it.

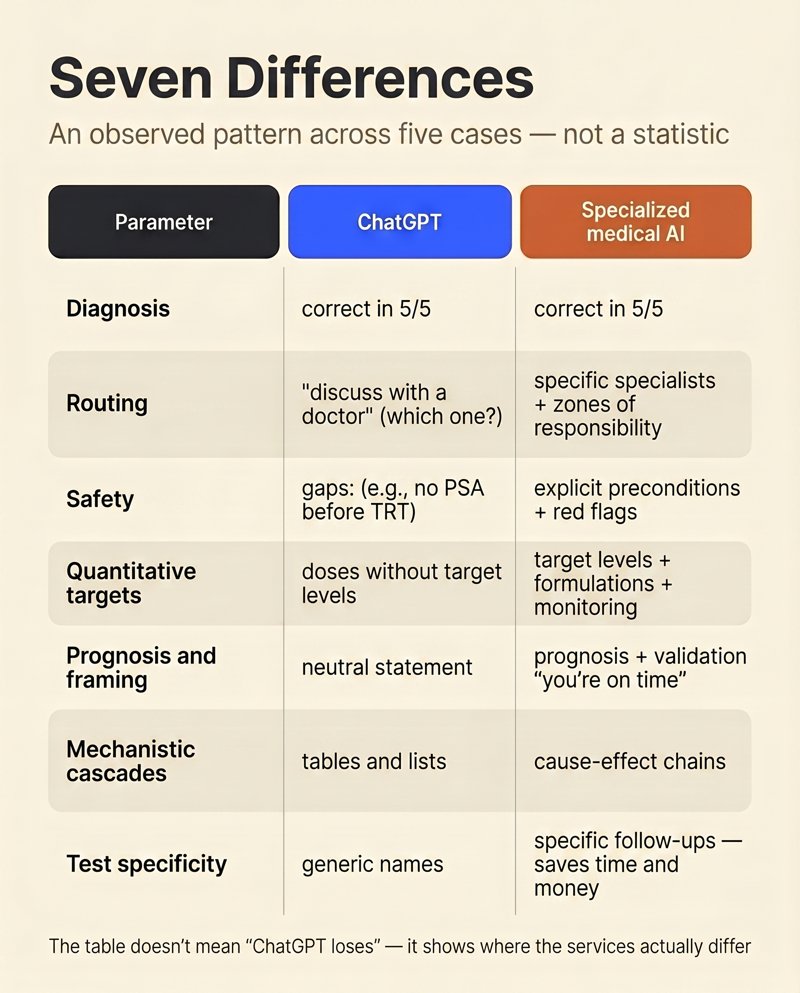

Seven Differences Between the Services

Not a statistic across a hundred cases — an observable pattern across five, repeated in each. We compared outputs line by line on seven parameters.

The table is not a statement that “ChatGPT loses.” It’s a map of where the two services diverge. On the quick “what does this even mean” question, ChatGPT is fast and good. On the plan of action before the doctor visit, it sags systematically. That isn’t a bug: GPT was trained on heterogeneous internet text, not on clinical patient-management algorithms.

Where ChatGPT Beat Us — Honestly

We ran the MGUS case (monoclonal gammopathy of undetermined significance) through both services — and here ChatGPT won on clinical substance. We promised in our methodology to name our misses directly. This is one of them.

Sixty-eight-year-old man. Complaints — generalized weakness for the past six months and intermittent back pain. On perindopril and atorvastatin 10 mg. Panel nearly normal except for three findings: total protein 92 g/L (elevated; normal 64-83), albumin 38 (normal), ESR 38 mm/hr (more than double the upper limit). Age 68 + six months of weakness + back pain + elevated total protein + elevated ESR = the classic “red-flag triad” for monoclonal gammopathy or multiple myeloma. It needs to be caught in screening.

What ChatGPT did better. It explicitly calculated the albumin/globulin ratio: total protein 92, albumin 38 → globulins 54, ratio 0.70 (normal >1.0). Conclusion: elevated globulins. From there it named a specific differential:

Three warning signs: ESR 38, total protein 92, and the symptom pattern (weakness plus back pain). This combination classically warrants ruling out multiple myeloma… Can also suggest: chronic inflammation, infection, rheumatologic disease, MGUS.

— ChatGPT

And it gave a specific workup: serum protein electrophoresis + immunofixation + free light chains (FLC) + Bence-Jones protein in urine + spinal X-ray/MRI + bone CT. That’s a real clinical checklist — exactly what a hematologist would order. It’s the difference between “workup for MGUS” and “workup for unexplained ESR.” According to recent NEJM AI coverage of GPT-4 on clinical cases, hematology-specific dd lists are an area where frontier models have improved noticeably.

What Wizey missed. We didn’t explicitly calculate the A/G ratio. We mentioned monoclonal gammopathies as a possibility but didn’t name the specific confirmatory tests — FLC, immunofixation, Bence-Jones. That’s a real product gap, not a subtlety of interpretation. The team sees it. Work is underway.

What Wizey still delivered. Despite the test-panel miss, the output wasn’t empty. It included something ChatGPT didn’t:

Prepare for your appointment: note when the weakness began, how often and where exactly your back hurts, whether you’ve had fevers, night sweats, or unintended weight loss.

— Wizey

That list — fever, night sweats, weight loss — is the classic “B-symptoms” screen for lymphoma and myeloma. A patient who arrives at a hematologist’s office with a two-line note reading “three episodes of night sweats over six months, about 3 kg unintended weight loss” saves the clinician twenty minutes of history-taking and improves the quality of the encounter.

Wizey also made a metacognitive note (“reference ranges weren’t printed on your report — I used internationally recognized norms”), gave a more precise urgency window (“1-2 weeks” versus ChatGPT’s “in the coming weeks”), and briefly commented on the current medications.

Editorial takeaway. This isn’t a win/loss — it’s two different operating modes. ChatGPT behaved like a clinician’s checklist in the patient’s hands: a list of tests to bring to a doctor. Wizey behaved like preparation for the doctor visit: B-symptoms, clearer complaint wording, a precise urgency window.

Both modes are legitimate. Missing the specific MGUS tests is a point where we criticize ourselves. But if the patient shows up at a hematologist with Wizey’s note and the hematologist orders FLC, immunofixation, and Bence-Jones themselves (which is their job), the twenty minutes saved on B-symptom history is worth a lot.

Two More Cases, The Same Pattern

Subclinical Hypothyroidism — Fifty-Two-Year-Old Woman

She presented with fatigue, weight gain, and dry skin. TSH 6.8 mIU/L (elevated; normal 0.4-4.0), free T4 and T3 within range. Total cholesterol 6.8 mmol/L, LDL 4.3. Both AIs correctly landed on subclinical hypothyroidism plus dyslipidemia. Primary diagnosis matched again.

They diverged in a single sentence. ChatGPT:

Current guidelines typically recommend starting treatment if: TSH >6-7, symptoms present, age <65. You meet all the criteria. Levothyroxine is usually prescribed at a low starting dose.

— ChatGPT

Superficially reasonable. Substantively, it’s a simplification with potentially harmful consequences. The American Thyroid Association and European Thyroid Association guidelines for TSH in the 4-10 mIU/L range actually say the opposite: the decision is individualized. Wizey captured that:

The decision to start treatment is individualized: TSH above 10 mIU/L generally warrants therapy; TSH in the 4-10 mIU/L range (as with you) depends on symptoms, presence of thyroid antibodies, comorbidities, and trajectory over time.

— Wizey

And it gave a sequence that ChatGPT skipped:

Repeat the lipid panel in follow-up — after thyroid function is corrected, cholesterol often normalizes on its own.

— Wizey

This is the pivotal detail. If a woman with TSH 6.8 and cholesterol 6.8 simultaneously starts levothyroxine and a statin, three months later she may be on a statin she doesn’t need: hypothyroidism by itself elevates cholesterol. Wizey added explicit routing (endocrinologist), a re-check of TSH and free T4 in 2-3 months, and an urgency window of “2-4 weeks.”

Case takeaway: both are right on the diagnosis. One nudges toward over-treatment and an unnecessary statin. The other builds a sequence.

The same pattern shows up in the next case, on a different topic — and the cost of oversimplification rises further.

Perimenopause — Fifty-Year-Old Woman

Patient: hot flashes, night sweats, insomnia, declining libido, “brain fog.” Family history — osteoporosis in her mother, coronary disease in her father. Panel: FSH 42 IU/L, estradiol 45 pmol/L (low), progesterone 1.2 nmol/L, anti-TPO 68 IU/mL (early Hashimoto’s), vitamin D 22 ng/mL, ferritin 32 μg/L, PTH 68 pg/mL, LDL 3.9 mmol/L. Both AIs landed on: perimenopausal transition + early autoimmune thyroiditis + vitamin D deficiency + latent iron deficiency + postmenopausal dyslipidemia.

They diverged on menopausal hormone therapy (MHT; older terminology is HRT, hormone replacement therapy — current usage prefers MHT). ChatGPT:

Menopausal hormone therapy. Very likely indicated. It reduces hot flashes, improves sleep, protects bone, improves lipids. Initiated by a gynecologist after evaluation.

— ChatGPT

Wizey opened the same topic into a decision frame:

Given your age (50), absence of contraindications, and pronounced symptoms — you’re in the “window of opportunity” for initiating MHT. Starting MHT within the first 10 years after the last menstrual period (you’re within that window) is associated with the greatest benefit: 80-90% reduction in hot flashes, improved sleep, mood, libido, bone and cardiovascular protection, prevention of genitourinary atrophy.

— Wizey

What matters isn’t only the “80-90%” number — it’s the safety gate ChatGPT skipped:

Pelvic ultrasound and mammography — to bring your screening current (last mammogram was two years ago, cytology three years ago). Contraindications must be ruled out before initiating MHT.

— Wizey

In much of Western patient culture, MHT discussions are either avoided or dominated by outdated WHI-era breast-cancer fears. A decision frame with an explicit window, a quantified effect size, and the precondition workup is rare — particularly for a woman whose gynecologist said “well, that’s your age, live with it.” Wizey also added a short emotional frame, without overreach:

Your symptoms aren’t “just aging” and aren’t something you have to accept. This is a manageable condition.

— Wizey

On quantitative targets, the same pattern as with the 45-year-old man. ChatGPT: “vitamin D 2000-4000 IU.” Wizey: target vitamin D 40-60 ng/mL, ferritin 50-100, elemental iron 40-80 mg in the morning on an empty stomach with vitamin C, calcium 1200-1500 mg, protein 1.0-1.2 g/kg body weight. The difference isn’t the dosing — it’s that the patient now has a checklist she can monitor against, not a general suggestion.

Case takeaway: the MHT conversation is a minefield of outdated information. A full decision frame here isn’t a bonus — it’s the baseline level of patient support.

What About an Urgent Case?

In the four outpatient cases, the main contrast was in routing, safety, and targets. But there’s another dimension you don’t see in routine scenarios — triage. A patient in a critical state should be calling emergency services, not opening an AI. In practice, they open the AI anyway. We ran one urgent case to see how both services handled it.

Fifty-two-year-old man. On atorvastatin 40 mg for 8 years for hypercholesterolemia, plus type 2 diabetes managed on metformin. Complaints: severe proximal muscle weakness (can’t lift his arms, can’t climb stairs), pain in shoulders and hips, dark urine for the past five days. Panel: CK 23,171 U/L (normal 30-200 — more than 115-fold elevated), AST 3,851, ALT 594, serum myoglobin 3,200, myoglobinuria positive, creatinine 188 μmol/L, eGFR 38 mL/min/1.73 m², potassium 5.3 mmol/L.

Both got it right. Diagnosis: statin-induced rhabdomyolysis with acute kidney injury. Etiology traced to atorvastatin. Recommendation: hospitalize. Both mentioned anti-HMGCR (HMG-CoA reductase antibodies) and anti-SRP — markers of statin-associated immune-mediated necrotizing myopathy (IMNM).

Beyond that, they split on three points.

Triage — the first line of the response. ChatGPT organized its answer into 12 blocks: markers, symptoms, causes, treatment. The phrase “go to the hospital immediately” showed up in the ninth block out of twelve — after a long wall of text. Wizey opened the same case differently:

Critical situation — immediate hospitalization required. Your labs indicate severe acute muscle tissue damage (rhabdomyolysis) that threatens kidney function and requires immediate medical care. This is a medical emergency.

— Wizey

And later in the urgency block:

CRITICAL urgency — hospitalization required within hours… If you feel sudden deterioration (severe weakness, irregular heartbeat, decreased urine output, confusion) — call emergency services.

— Wizey

In an emergency, the first line of the response determines the outcome. If the patient sees a 12-block analysis with “go to the hospital immediately” in block nine, they may lose 10-15 minutes to reading. The placement of triage is the difference between “called emergency services” and “read to the end.”

Clinical reasoning — the AST/ALT calculation. AST 3,851 and ALT 594 are easy to misread as severe hepatic injury: transaminases through the roof, therefore liver. Wizey did the explicit math:

AST/ALT ratio = 6.5 (normal is roughly 1). This degree of AST dominance over ALT is typical for muscle injury, not hepatic injury.

— Wizey

Without that calculation, the patient could be terrified of “liver catastrophe” — when the source of the transaminases here is muscle. ChatGPT mentioned the pattern in general terms, but didn’t compute the ratio.

Answering “why now?” The patient had been on atorvastatin for 8 years. Why did rhabdomyolysis happen right now?

Rhabdomyolysis may have been triggered by: subtle dehydration, drug interactions, or cumulative statin exposure on a background of worsening renal function (reduced kidney clearance → rising statin levels).

— Wizey

ChatGPT skipped this question. It’s an important one — it’s the reason continuing the statin after recovery is not an option.

Post-hospital plan. ChatGPT’s was essentially nonexistent: stop the statin, fluids, monitoring. Wizey’s was a full discharge plan: hydration 2-2.5 L/day, protein restriction to 0.8-1 g/kg, potassium restriction, avoid NSAIDs, CoQ10 100-200 mg/day, SLCO1B1 genotyping, statin alternatives (ezetimibe, fibrates, PCSK9 inhibitors), renal function and CK monitoring at minimum every 3 months for the first year.

Case takeaway. In routine scenarios, triage is about tact. In emergencies, it’s clinical responsibility. The difference in the first line, in the ratio calculation, and in the discharge plan is the difference between “information” and “navigation.” To be absolutely clear: in this kind of situation, the patient should call 911 / emergency services — not open an AI.

Limitations of the Experiment

We promised methodological transparency. Here’s what’s weak about what we did.

Five cases is a pattern illustration, not a statistic. Evidentiary conclusions need hundreds of runs, ideally with blinded raters and a pre-registered methodology. We saw the same pattern in each of the five, but we acknowledge the sample is too small. A sophisticated reader is entitled to say “show me 200 cases.” It’s a fair demand.

On MGUS we missed the specific confirmatory tests — FLC, immunofixation, Bence-Jones. That isn’t “didn’t understand the context” — it’s a real product gap. The team knows it, and work on better retrieval of clinically relevant fragments for specific panel patterns is in progress.

“Lost in the Middle.” We initially expected that in the statin-rhabdomyolysis case — a panel of ~70 markers with atorvastatin buried mid-list among eight medications — ChatGPT would fail to connect the myopathy to the statin. That didn’t happen: the model correctly tied atorvastatin to the elevated CK. It’s possible the effect appears on larger panels (150+ markers), which we didn’t test. The hypothesis is unverified.

One model, one version. We tested ChatGPT on GPT-5.4 — a specific snapshot in time. We didn’t test other public LLMs on these cases. Results may differ.

Conflict of interest. We’re the Wizey team. To limit it: the methodology was fixed before the runs (case list, prompt, services), and all outputs are quoted verbatim.

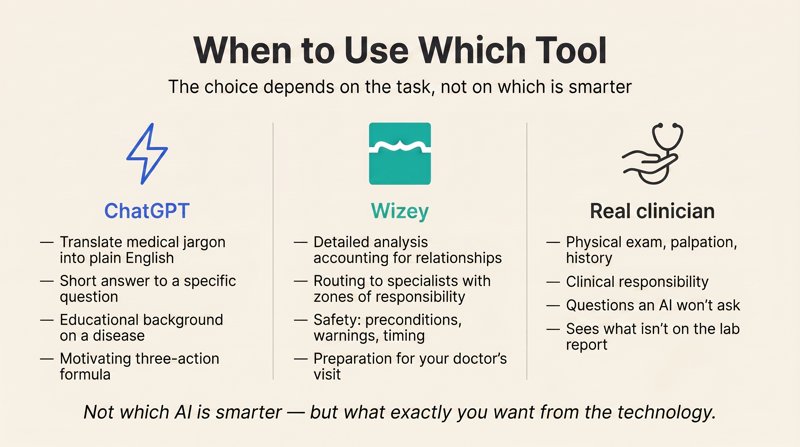

When to Use Which Tool

The conclusion isn’t “use Wizey.” The conclusion is that the choice depends on the task.

ChatGPT is genuinely good for:

- Translating medical language into plain English (“what does HOMA-IR actually mean?”)

- Short answers to a focused question (“what is ferritin?”)

- Educational background on diseases, especially rare ones

- The motivating “three things to do now” formula — a concrete first step

A specialized medical AI is good for:

- A structured read of a full laboratory panel

- Routing to specialists with specific responsibilities

- The safety layer — preconditions before therapy, warning signs, urgency windows with timing

- Quantitative targets — what level to reach, what formulation to choose, when to recheck

- Visit preparation — how to frame complaints, what questions to ask, what to bring to make the appointment efficient

A real clinician is still necessary. No AI can do the physical exam, palpation, or carry clinical responsibility for the patient. A good clinician can still ask questions the AI hasn’t learned to generate, and sees what isn’t on the lab report. But arriving prepared — with a referral map, well-framed complaints, and a list of questions — is dramatically better than arriving confused with a stack of results.

The question isn’t “which AI is smarter.” The question is what you specifically want from the technology: a quick answer, or a structured analysis of your particular situation.

Mini-FAQ

Why didn’t ChatGPT miss the primary diagnosis in any of the five cases? The primary diagnosis is the most likely hypothesis given a panel, and on common clinical patterns — metabolic syndrome, subclinical hypothyroidism, rhabdomyolysis — LLMs genuinely do well. The problem isn’t the diagnosis itself. It’s what happens after: routing patients to the right specialists, safety gates before therapy, target levels and monitoring intervals, clinical calculations like the AST/ALT ratio.

What is a “safety layer” and why does it matter in lab interpretation? A safety layer is the set of preconditions and screenings that must happen before any therapy is started — for example, PSA before testosterone replacement, or mammography and pelvic ultrasound before menopausal hormone therapy. ChatGPT consistently skipped these gates, not because it “doesn’t know,” but because it was trained on heterogeneous internet text, not on clinical patient-management algorithms.

You only tested ChatGPT. What about Claude, Gemini, or other models? Only ChatGPT in this experiment — GPT-5.4 on the Plus tier. We didn’t run the same cases through other LLMs. We’ve published the full input panels and verbatim outputs, so anyone who wants to repeat the experiment on other models can compare results directly. We’ll link your writeup if you send it.

Five cases isn’t much. Where’s the evidence at scale? Fair question. Five cases is a pattern illustration, not a statistic. We saw the same shape in each of the five, but we’re not presenting this as a randomized study. Evidentiary conclusions need hundreds of runs with blinded raters and a pre-registered methodology — that’s the next phase the team is working on.

Where’s the raw experiment data? The complete input panels and verbatim outputs from both services across all five cases are published as a separate companion page at /blog/2026/04/17/wizey-vs-chatgpt-raw-experiment-data/. The reproduction methodology is there too, if anyone wants to run the same patients through other models.

The Bottom Line

Correct diagnosis is the easy part. What comes after — the referral map, the safety gates, the target levels, the prognosis, the triage — is where the real work of patient care happens. That’s the layer where general-purpose LLMs consistently sag, and the layer where specialized medical AI is built to live.

If you want a tool designed specifically for this kind of multi-panel lab interpretation, that’s what we’re building at Wizey. It’s not a substitute for a clinical consultation — it’s meant to help you arrive at one prepared. The complete raw outputs from both services across all five cases are published openly, along with the reproduction methodology, in case anyone wants to run the same patients through a different model.